Testing the Elo Rating System

Introduction

This is the third article in the series about the Elo rating system.

In the first article we focused on understanding the math, in the second we wrote code to use the system, and in this article we’ll look at testing the system.

As you may have seen in the code repository, we already have a set of unit tests that verify basic functionality, such as “given player A has the same rating as player B, the probability or expected score should be 0.5”. You might be asking, “What is different about this additional type of testing?”

In these tests we look at the system as a whole and analyze how it operates at scale over time. Essentially, we want to build a simulator that lets us create players and have them play games. We’ll be able to adjust how many players exist and how many games they play, then look at the results for unexpected behavior. Examples might be abnormally high or low ratings caused by incorrect calculations, or players whose ratings consistently drift in one direction. This testing gives us stronger confidence that, once deployed, the library will be stable and won’t cause catastrophic behavior that would require a data reset.

Building the simulator also forces us to better understand the experience of being a consumer of this rating system library. We will be able to compare more closely what I defined in the last article as “symmetric” and “asymmetric” rating systems: the former is standard, and the latter gives certain types of players different K values. I had theorized this could work but want to see it in practice.

The basic structure of the simulator:

Setup

1. Create the rating system

2. Create the players

Execute Simulation

3. For each player, play N games

- 3.1: For each game

- 3.2: Find an appropriate contender

- 3.3: Simulate a game, record the result, and update each player’s rating

Save the Results

4. Convert the results to a CSV file for viewing

As mentioned above, we will actually have two simulators, one for the symmetric system and one for the asymmetric system, so we can compare them. To make the comparison fair, we initialize both sets of players with the same values, such as initial ratings, and then see how they diverge over time. Essentially, this means duplicating each step above for each set of players (symmetric and asymmetric).

All of the code we’ll be going through can be found here:

Rating System Simulator

1. Creating the Systems

We’ll set up the systems and show how one might create an asymmetric system, as there wasn’t much time spent on it in the other articles beyond noting it was possible. For the symmetric system we use all the defaults and can simply call createRatingSystem(); for the asymmetric system, however, we must provide a custom K factor function that changes the K value depending on the type of player. We use playerIndex to choose an initial K value of either 32 or 4. There is also some scaling that lowers the K value as players get better, based on the suggestion in the wiki articles. I could imagine a more sophisticated function that also takes in the number of games played, which we could use to make ratings more stable over time.
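A minimal sketch of such a K factor function might look like the following. The name asymmetricKFactor, its (rating, playerIndex) signature, the convention that index 0 is the user and index 1 is the question, and the rating thresholds are all assumptions made for illustration; the function in the repository, and the way it is handed to createRatingSystem, may differ.

```javascript
// Assumption: index 0 is the human user, index 1 is the question.
const USER_INDEX = 0;
const QUESTION_INDEX = 1;

// Illustrative thresholds only: the K value shrinks as the rating grows.
function scaleByRating(baseK, rating) {
  if (rating < 2100) return baseK;
  if (rating <= 2400) return baseK * 0.75;
  return baseK * 0.5;
}

// Hypothetical custom K factor: users start at 32, questions start at 4.
function asymmetricKFactor(rating, playerIndex) {
  const baseK = playerIndex === QUESTION_INDEX ? 4 : 32;
  return scaleByRating(baseK, rating);
}

console.log(asymmetricKFactor(1000, USER_INDEX));     // 32
console.log(asymmetricKFactor(2500, USER_INDEX));     // 16
console.log(asymmetricKFactor(1000, QUESTION_INDEX)); // 4
```

Keeping the base value and the scaling in separate functions makes it easy to later add a third input, such as the number of games played.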

2. Create the Players

Next we’ll create the players. If you remember, the particular application I intend to use this asymmetric rating system in is a type of online game where users answer questions. Both users and questions will be represented as “players” whose ratings change over time.

In a real system I believe all players would be given an initial rating based on the parameters of the Elo system. For example, with the conventional scale factor of 400 and K factor of 32, the expected skill range of players is roughly 1000 to 5000. In this case, I believe most systems start users at 1000, although I’ve heard of others that use 1400. This would be a simple fixed value that we could assign.

However, for our testing we want to see how the system behaves for users that span the expected rating range, to give us the most assurance that it is operating correctly. We’re going to create tiers of users, starting at the initial rating and increasing by a set amount until we’ve gone through each tier, e.g. 1000, 1500, 2000, 2500, etc.

For the questions the process is very similar, but we will create many more of them, with more tiers, to better simulate the exposure of a single player to multiple questions as they continue to play.
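The tiering could be sketched roughly as below. The helper createTieredPlayers, the shape of the player objects, and the specific step sizes and counts are hypothetical choices for illustration, not the values used in the repository.

```javascript
// Build `tiers` rating levels starting at `initialRating`,
// with `perTier` players created at each level.
function createTieredPlayers(prefix, initialRating, step, tiers, perTier) {
  const players = [];
  for (let tier = 0; tier < tiers; tier++) {
    const rating = initialRating + tier * step;
    for (let i = 0; i < perTier; i++) {
      players.push({ id: `${prefix}-${tier}-${i}`, rating });
    }
  }
  return players;
}

// Four users: 1000, 1500, 2000, 2500.
const users = createTieredPlayers("user", 1000, 500, 4, 1);
// Many more questions on finer tiers: 1000 to 3000 in steps of 250, 20 per tier.
const questions = createTieredPlayers("question", 1000, 250, 9, 20);

// Copy the starting state so both systems begin from identical ratings.
const symmetricUsers = users.map((u) => ({ ...u }));
const asymmetricUsers = users.map((u) => ({ ...u }));

console.log(users.map((u) => u.rating)); // [ 1000, 1500, 2000, 2500 ]
console.log(questions.length);           // 180
```

Copying the objects rather than sharing them matters here: each simulator mutates ratings as games are played, so shared objects would let one system contaminate the other.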

3. Execute Simulation

As outlined in the original pseudocode, we will go through each player, find an opponent (a question within a specified range of that player’s rating), play a game, and record the results for later analysis.

The looping through players and recording results is standard so we’ll skip to the interesting parts.

Getting an appropriate question for the player:

In a real production system you could imagine we have a database of thousands of questions to pick from. We don’t want to pick a question that is too difficult, because the player would likely never answer it correctly and would become frustrated. We also don’t want to pick a question that’s too easy, because the player will not be challenged. They may become bored and not return to play more.

We will disregard the technicalities of a production system; in our testing we can iterate over all questions to find an appropriate match. We will pick a random question within an acceptable range of ratings. In other words, given a player of rating R, find a question with a rating in the range [R-X, R+X].
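One way to express that matching rule is sketched below. The function name findQuestionInRange and the optional random parameter (added so the choice can be made deterministic in tests) are assumptions for illustration.

```javascript
// Return a random question whose rating lies in [R - X, R + X],
// or undefined when no question is close enough.
function findQuestionInRange(questions, playerRating, range, random = Math.random) {
  const candidates = questions.filter(
    (q) => Math.abs(q.rating - playerRating) <= range
  );
  if (candidates.length === 0) return undefined;
  // random() is in [0, 1), so the index is always within bounds.
  return candidates[Math.floor(random() * candidates.length)];
}

const sampleQuestions = [
  { id: "q-easy", rating: 900 },
  { id: "q-even", rating: 1100 },
  { id: "q-hard", rating: 1400 },
];

// Only the 900 and 1100 questions are within 150 points of a 1000-rated player.
console.log(findQuestionInRange(sampleQuestions, 1000, 150));
```

Filtering the whole list on every game is linear in the number of questions, which is fine for a simulation but is exactly the part that would need rethinking at production scale.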

Computing the Expected Score and Actual Score

For the expected score we use (expected probability > 0.5): the player is predicted to win when their expected probability is above 0.5. For the actual score we check whether a random value is less than the player’s probability. This effectively biases the 1 or 0 based on how likely the player is to win. For example, if the player probability is 0.73, the expression Math.random() < 0.73 ? 1 : 0 means there is a 73% chance that the random value chosen in the range [0, 1) will be less than 0.73. Since the random value can still be greater, this represents the ability of a player to win when they are expected to lose, or vice versa.
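The biased coin flip can be checked empirically with a small sketch like this one. The function names and the injectable random parameter are hypothetical; only the Math.random() < probability ? 1 : 0 expression comes from the article.

```javascript
// Predicted outcome: 1 when the player is more likely than not to win.
function predictedScore(playerProbability) {
  return playerProbability > 0.5 ? 1 : 0;
}

// Actual outcome: 1 with probability `playerProbability`, otherwise 0.
function simulateActualScore(playerProbability, random = Math.random) {
  return random() < playerProbability ? 1 : 0;
}

// Over many trials the win rate should land close to the probability.
const trials = 100000;
let wins = 0;
let upsets = 0;
for (let i = 0; i < trials; i++) {
  const actual = simulateActualScore(0.73);
  wins += actual;
  if (actual !== predictedScore(0.73)) upsets++;
}
const winRate = wins / trials;
console.log(`win rate: ${winRate.toFixed(3)}, upsets: ${upsets}`);
```

The upsets counter tracks the games where the actual score disagrees with the predicted one, which is the "win when expected to lose" case described above.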

Putting it all together

Now that we have a player, a question, and the player’s score, we can compute the next rating information.

You can see the parts about saving the results and updating the player and question ratings.
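As a rough sketch of the rating update itself, the standard Elo formulas can be applied with a separate K value per side. The names expectedProbability and getNextRatings, the (rating, playerIndex) K factor signature, and the rounding to whole numbers are assumptions for illustration rather than the library's actual API.

```javascript
// Standard Elo expected probability that A beats B.
function expectedProbability(ratingA, ratingB, scaleFactor = 400) {
  return 1 / (1 + Math.pow(10, (ratingB - ratingA) / scaleFactor));
}

// playerScore is 1 when the player answered correctly, 0 otherwise.
// kFactor(rating, playerIndex) returns the K value for that side
// (assumption: index 0 is the player, index 1 is the question).
function getNextRatings(player, question, playerScore, kFactor) {
  const playerExpected = expectedProbability(player.rating, question.rating);
  const questionExpected = 1 - playerExpected;
  const questionScore = 1 - playerScore;

  const playerDiff = Math.round(
    kFactor(player.rating, 0) * (playerScore - playerExpected)
  );
  const questionDiff = Math.round(
    kFactor(question.rating, 1) * (questionScore - questionExpected)
  );

  return {
    playerDiff,
    questionDiff,
    nextPlayerRating: player.rating + playerDiff,
    nextQuestionRating: question.rating + questionDiff,
  };
}

const symmetricK = () => 32;
const asymmetricK = (_rating, playerIndex) => (playerIndex === 1 ? 4 : 32);

const player = { rating: 1500 };
const question = { rating: 1500 };

// Evenly matched, player answers incorrectly.
console.log(getNextRatings(player, question, 0, symmetricK));  // diffs: -16 and 16
console.log(getNextRatings(player, question, 0, asymmetricK)); // diffs: -16 and 2
```

With equal ratings the expected probability is exactly 0.5, so the change is simply half of each side's K value, which makes the difference between the two systems easy to see.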

Here’s how we could call the simulateGames function:
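A compact, self-contained sketch of the loop and a call to it follows. The signature simulateGames(players, questions, gamesPerPlayer, kFactor, range) and the shape of the result rows are hypothetical; the real function in the repository may take different arguments.

```javascript
// Hypothetical signature; kFactor(rating, playerIndex) as assumed earlier.
function simulateGames(players, questions, gamesPerPlayer, kFactor, range = 200) {
  const results = [];
  for (const player of players) {
    for (let game = 1; game <= gamesPerPlayer; game++) {
      // Pick a random question rated within [R - range, R + range].
      const candidates = questions.filter(
        (q) => Math.abs(q.rating - player.rating) <= range
      );
      if (candidates.length === 0) break; // nobody left to play against
      const question = candidates[Math.floor(Math.random() * candidates.length)];

      // Standard Elo expected probability that the player answers correctly.
      const expected =
        1 / (1 + Math.pow(10, (question.rating - player.rating) / 400));
      const playerScore = Math.random() < expected ? 1 : 0;

      const playerDiff = Math.round(kFactor(player.rating, 0) * (playerScore - expected));
      const questionDiff = Math.round(kFactor(question.rating, 1) * (expected - playerScore));

      // Record the state before the update, then apply the changes.
      results.push({
        playerId: player.id,
        game,
        playerRating: player.rating,
        questionRating: question.rating,
        playerScore,
        playerDiff,
        questionDiff,
      });
      player.rating += playerDiff;
      question.rating += questionDiff;
    }
  }
  return results;
}

// Fresh, identical starting data for each system.
const makePlayers = () => [
  { id: "user-0", rating: 1000 },
  { id: "user-1", rating: 1500 },
];
const makeQuestions = () =>
  [900, 1000, 1100, 1400, 1500, 1600].map((rating, i) => ({ id: `q-${i}`, rating }));

const symmetricResults = simulateGames(makePlayers(), makeQuestions(), 50, () => 32);
const asymmetricResults = simulateGames(
  makePlayers(),
  makeQuestions(),
  50,
  (_rating, playerIndex) => (playerIndex === 1 ? 4 : 32)
);

console.log(symmetricResults.length, asymmetricResults.length);
```

Calling the factory functions once per system gives each simulator its own copy of the players and questions, so the two runs start from the same ratings without sharing state.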

After the simulation we have a list of results for each game. We want to look at them more closely to analyze the change in ratings over time. We could simply have a list of ratings, but seeing only that number without the context of the player’s data would not be very helpful. Let’s create a .csv file so we can view the data in Excel.

4. Convert the Results to CSV

Remember, a comma-separated values (CSV) file should be formatted as follows:

header1,header2,header3

value11,value12,value13

value21,value22,value23

value31,value32,value33

Given a list of objects, we will create a string that represents the CSV, then write it to a file.

await fs.writeFile(fileName, csvString, 'utf8')
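Building the CSV string from a list of objects could be sketched as follows. The toCsv helper is hypothetical, and it assumes every object has the same keys as the first one; the quoting rule for values containing commas, quotes, or newlines is an addition the simple format above does not need but real data might.

```javascript
// Convert a list of flat objects into a CSV string.
// Assumption: all rows share the keys of the first row.
function toCsv(rows) {
  if (rows.length === 0) return "";
  const headers = Object.keys(rows[0]);

  // Quote a value only when it contains a comma, a quote, or a newline.
  const escapeValue = (value) => {
    const text = String(value);
    return /[",\n]/.test(text) ? `"${text.replace(/"/g, '""')}"` : text;
  };

  const lines = [
    headers.map(escapeValue).join(","),
    ...rows.map((row) => headers.map((h) => escapeValue(row[h])).join(",")),
  ];
  return lines.join("\n");
}

const csvString = toCsv([
  { game: 1, playerRating: 1000, playerDiff: 13 },
  { game: 2, playerRating: 1013, playerDiff: -18 },
]);
console.log(csvString);
// game,playerRating,playerDiff
// 1,1000,13
// 2,1013,-18
```

The resulting string is what gets passed as csvString to the fs.writeFile call shown above.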

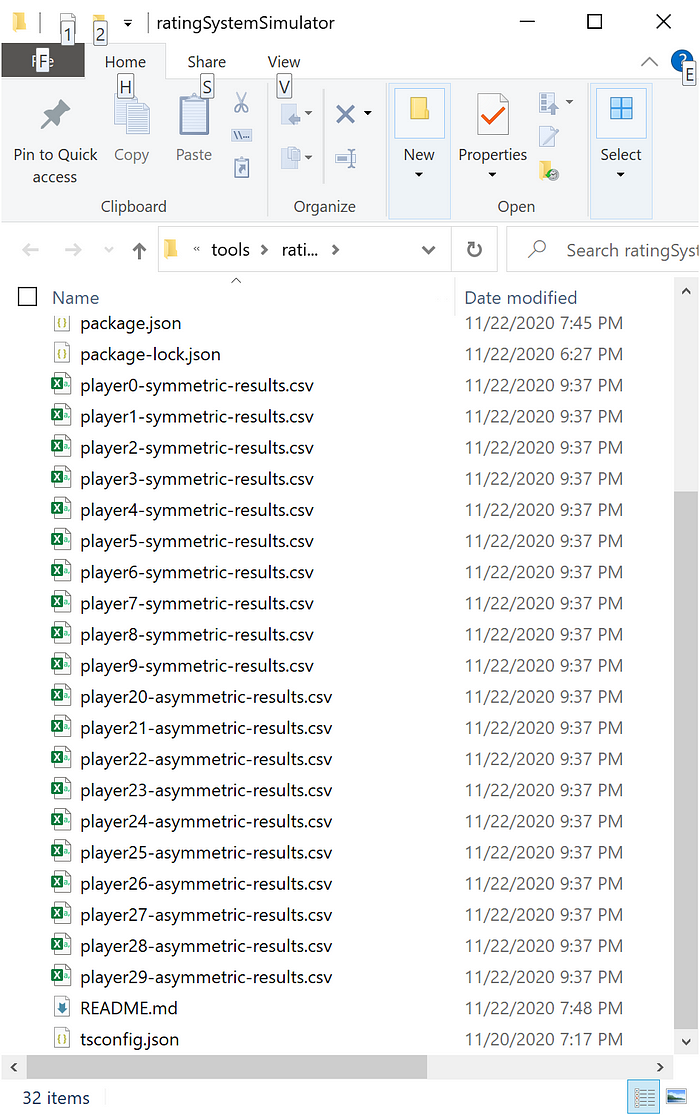

After we run the script it will generate a file for each player of each system:

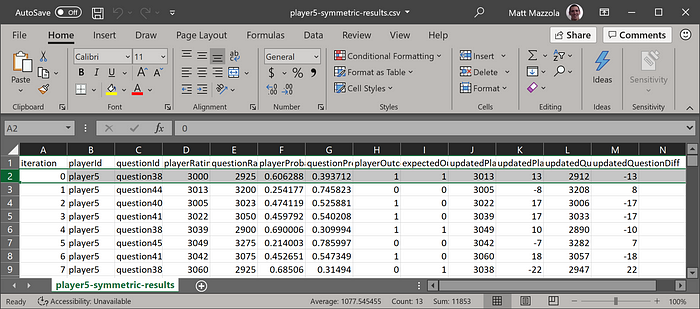

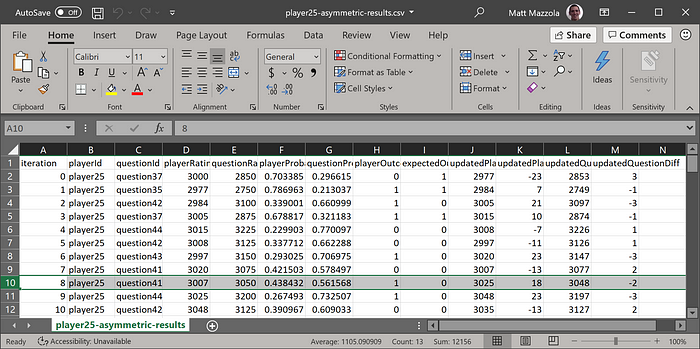

If we view one of those results in Excel, we can see that in the symmetric system the changes for players and questions are equal and opposite, for example +13 and -13. With the asymmetric system, however, we see players and questions receiving different changes, such as -18 and +2. In this case the player’s rating changes a lot while the question’s rating changes very little, because the question’s K value is so much smaller (4 vs 32).

Conclusion

In this article we looked at how to write a simulator to test and observe behavior of the Elo rating system we developed in the previous article.

I think the biggest question I still have, and the next step toward putting this into production, is how to build a proper matching function at scale when you can’t iterate through all players. Perhaps that will be the topic of a future article.

This was less abstract and technical than the previous articles, but hopefully you still found it valuable and learned something. Let me know what you think!